For updated information on our work in this area please visit wit.to/Synthetic-Media-Deepfakes. This includes an updated version of this survey.

This is the second in a series of blogs on a new area of focus at WITNESS around the emerging and potential malicious uses of so-called “deepfakes” and other forms of AI-generated “synthetic media” and how we push back to defend evidence, the truth and freedom of expression. The first in the blog series can be accessed here.

This work kicked off with an expert summit – the report on that is available here.

This work is embedded in a broader initiative focused on proactive approaches to protecting and upholding marginal voices and human rights as emerging technologies such as AI intersect with the pressures of disinformation, media manipulation, and rising authoritarianism.

In this blog, we survey the range of solution areas that have been suggested to confront the mal-uses of synthetic media. Our goal is to share a range of approaches that work at a range of scales, draw on a diversity of actors, address specific threat models and include legal, market, norms or code/technology-based approaches.

Solution space: Analogies and existing experiences we can learn from?

As part of a recent expert meeting, we reviewed a range of potential analogies that could shape thinking at this early stage in the discussion and that could help avoid the conversation being bound by one problem framing.

Potential analogies that could shape responses could include:

- Banknotes and anti-forgery? In this case, an institution makes it hard to create the notes and provides an easily accessible way for individuals and businesses to verify them.

- Legal documents and chain of custody? Established systems track content within a legal process.

- Spam detection and harm reduction? Including consideration of acceptable levels of false positives.

- Image detection and hashing? As used in policies and technologies deployed against violent extremism, copyright violations, and child exploitation imagery.

- Drug resistance? If we don’t come up with a common approach, it allows a threat actor to build skills and ability in a particular under-resourced context.

- Cyber-security? Suggesting that this will be a constant offense-defense contest, with use of blue- and red-team testing on risk areas.

- Asymmetrical warfare?

- Journalism and experience of verification of user-generated content? Including the lesson that widely shared open-source tools can help build a strong community of practice around better verification.

- Human rights and particularly existing patterns of attacks on the basis of gender?
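
To make the image hashing analogy above a little more concrete, here is a minimal sketch of a perceptual "average hash": near-identical images produce near-identical bit strings, so a re-encoded or slightly brightened copy can still be matched against a known item. This is an illustration only. The 4x4 grayscale grids, the function names and the `max_distance` threshold are all assumptions made for the example, and deployed systems use more robust algorithms than this.

```python
from typing import List

Image = List[List[int]]  # grayscale pixels (0-255), assumed already downscaled


def average_hash(pixels: Image) -> int:
    """Pack one bit per pixel: 1 if the pixel is brighter than the image mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Number of bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")


def is_probable_match(a: Image, b: Image, max_distance: int = 2) -> bool:
    """Treat two images as the same item if their hashes differ in few bits."""
    return hamming_distance(average_hash(a), average_hash(b)) <= max_distance


# Hypothetical toy image: dark on the left, bright on the right.
original = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [12, 22, 202, 212],
    [11, 21, 201, 211],
]
# The same picture after mild brightening: values shift, the pattern holds.
brightened = [[min(255, p + 8) for p in row] for row in original]
# A different picture: bright on the left instead of the right.
different = [list(reversed(row)) for row in original]

print(is_probable_match(original, brightened))
print(is_probable_match(original, different))
```

The design trade-off is the one the spam analogy raises: a looser `max_distance` catches more altered copies but also produces more false matches.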

Potential approaches and pragmatic or partial (and not-so) solutions

We also looked at a range of potential solution approaches including:

Invest in media literacy and resilience for news consumers and invest in resiliency and discernment against disinformation

There is already an increase in funding and support for efforts to promote media literacy around disinformation among educators, foundations and media outlets. These initiatives could further integrate commonsense approaches to spotting individual items of synthetic media (e.g. via visible anomalies such as mouth distortion that are often present in current deepfakes), as well as develop approaches to assessing credibility more broadly and to supporting people on how to engage with this content. This article by Craig Silverman for Buzzfeed is an example, noting some simple steps that can currently be taken to identify a deepfake. Some, such as checking the source, are common to other media literacy and verification heuristics, while advice to “inspect the mouth” and “slow it down” is specific to the current moment in deepfakes detection.

Other work will need to build on the growing body of research on social media and disinformation. A recent Harvard Business Review article, “Big Idea on ‘Truth, Disrupted’,” provides a summary of recent research and approaches. More depth is provided in resources such as the Council of Europe’s “Information Disorder” report, the Social Science Research Council report on the state of the field in Social Media & Democracy, the recent scientific literature on “Social Media, Political Polarization, and Political Disinformation” and The Science of Fake News, as well as the ongoing work of Data & Society and First Draft. Research and responses lag significantly in how to deal with visual and audio information and in non-U.S. contexts.

Build on existing efforts in civic and journalistic education and tools for practitioners

There is a range of existing efforts in journalistic, human rights and OSINT discovery and verification efforts that support practitioners in those areas to better find, verify and present open-source information, including video, audio, and social media content. New approaches to recognizing and debunking deepfakes and synthetic media can be built into the toolkits, browser extensions, and industry training provided by First Draft, Google News Initiative and similar non-governmental and industry peers, as well as the efforts of groups like Bellingcat and WITNESS’ Media Lab and Video As Evidence efforts. They will undoubtedly also be integrated by industry leaders working in social media verification such as Storyful and the BBC.

Reinforce journalistic knowledge and enhanced collaboration around key events by supporting better collaboration on understanding and rapid identification and response in the journalism community

In response to misinformation threats, competing journalistic organizations have worked together around elections and other potential crises via initiatives such as Crosscheck and Verificado. In preparation for potential deepfake deployment that will try to target “weak links” in the information chain in upcoming elections in the U.S. and elsewhere, coalitions of news organizations working on shared verification can integrate an understanding of deepfakes and synthetic media, threat models and response approaches into their collaboration and planning as well as coordinate with researchers and forensic investigators.

Explore tools and approaches for validating, self-authenticating and questioning individual media items and how these might be mainstreamed into commercial capture/sharing tools

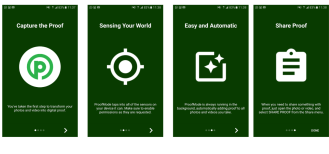

An increasing range of apps and tools seek to provide a more metadata-rich, cryptographically signed, hashed or otherwise verifiable image from point of capture. These include apps for journalists and human rights defenders such as ProofMode and commercial tools such as TruePic. A potential additive element here that a range of companies is exploring is the use of the blockchain as a distributed media ledger to track content and edits. The use and validation of ‘confirmed’ live video, recorded from a live broadcast, might also play a role. More whimsically certain creators could pursue a re-emphasis on analog media for trustworthiness.
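
As a rough illustration of what "hashed and cryptographically signed at point of capture" can mean, the sketch below hashes the media bytes, bundles the hash with capture metadata, and signs the bundle so later tampering with either can be detected. It is not a description of how ProofMode or TruePic work. The function names, the metadata field and the key are hypothetical, and HMAC with a shared secret is used only because it is in the Python standard library; a real tool would more likely use a public-key signature so that verifiers never need the signing secret.

```python
import hashlib
import hmac
import json


def make_capture_record(media: bytes, metadata: dict, device_key: bytes) -> dict:
    """Hash the media, bundle the hash with metadata, and sign the bundle."""
    digest = hashlib.sha256(media).hexdigest()
    payload = json.dumps({"sha256": digest, "metadata": metadata}, sort_keys=True)
    signature = hmac.new(device_key, payload.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"payload": payload, "signature": signature}


def verify_capture_record(media: bytes, record: dict, device_key: bytes) -> bool:
    """Check that the record is untampered and that it matches these media bytes."""
    expected = hmac.new(
        device_key, record["payload"].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    if not hmac.compare_digest(expected, record["signature"]):
        return False  # the hash or metadata was altered after signing
    claimed = json.loads(record["payload"])["sha256"]
    return hmac.compare_digest(claimed, hashlib.sha256(media).hexdigest())


# Hypothetical inputs for illustration only.
key = b"hypothetical-device-secret"
video = b"\x00\x01 raw bytes standing in for a video file"
record = make_capture_record(video, {"captured_at": "2018-06-11T10:00:00Z"}, key)

print(verify_capture_record(video, record, key))
print(verify_capture_record(video + b"edited", record, key))
```

A check like this only shows that a file is unchanged since it was signed. It says nothing about whether the scene in front of the camera was itself staged, which is one reason to treat it as a partial solution.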

WITNESS will be leading a baseline research project to understand the pros and cons of a range of ways to track authenticity, integrity, provenance and digital edits of images, audio, and video from capture to sharing to ongoing use. We’ll focus on a rights-protecting approach that a) maximizes how many people can access these tools, b) minimizes barriers to entry and potential suppression of free speech without compromising the right to privacy and freedom from surveillance, c) minimizes risk to vulnerable creators and custody-holders and balances these with d) the potential feasibility of integrating these approaches in a broader context of platforms, social media and search engines. This research will reflect platform, independent commercial and open-source activist efforts, consider the use of blockchain and similar technologies, review precedents (e.g. spam and current anti-disinformation efforts) and identify pros and cons to different approaches as well as the unanticipated risks.

Invest in rigorous approaches to cross-validating multiple visual sources

Approaches pioneered by groups like Situ Research with its Euro-Maidan Ukraine killings reconstruction, Forensic Architecture, Bellingcat and the New York Times Video Investigations Team utilize combinations of multiple cameras documenting an event as well as spatial analysis to create robust accounts for the public record or evidence. These approaches could overlap with improved tools for authenticating and ground-truthing eyewitness video, allowing for one authenticated video to anchor a range of other audiovisual content.

Invest in new forms of manual video forensics

As synthetic media advances, new forms of manual and automatic forensics could be refined and integrated into existing verification tools utilized by journalists and factfinders as well as potentially into platform-based approaches. These will include approaches that build on existing understanding of how to detect image manipulation and copy-paste-splice, as well as approaches customized to deepfakes such as the idea of making blood flow more visible via Eulerian video magnification with the assumption that natural pulse will be less visible in deepfakes (note: some initial research suggests this may not be the case).

The US government via its DARPA MediFor Program (as well as via media forensics challenges from NIST) continues to invest in a range of manual and automatic forensics approaches that include refinements on existing approaches for identifying paste and splice into images and tracking camera identities and fingerprints. Other approaches look for physical integrity (‘does it break the laws of physics’) issues, such as checking for inconsistencies in lighting, reflection and audio, as well as reviewing the semantic integrity of scenes and identifying image provenance and origins (pdf). Many are also exploring the new neural network-based approaches described below.

Invest in new forms of deep learning-based detection approaches

New automatic GAN-based forensics tools such as FaceForensics generate fakes using tools like FakeApp and then utilize these large volumes of fake images as training data for neural nets that do fake-detection.

These and similar tools developed in programs like MediFor, such as the use of neural networks to spot the absence of blinking in deepfakes (pdf), could be incorporated into key browser extensions or dedicated tools like InVid. They could also form part of the approaches that platforms, social media networks and search engines take to identifying signs of manipulation. Platforms have access to significant collections of images (including, increasingly, the new forms of synthetic media) and could collaborate on maintaining updated training data sets of new forms of manipulation and synthesis to best facilitate use of these tools. Platforms, as well as independent repositories such as the Internet Archive, also have significant databases of existing images that can form part of detection approaches based on image phylogeny and provenance that detect the use of elements of existing images via both neural networks and other approaches.
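
To give a sense of the kind of signal a blink-based detector looks for, here is a deliberately simple sketch. It is not the neural network approach referenced above: it assumes some upstream model has already produced a per-frame "eye openness" score between 0 and 1, and it only counts blinks and flags clips with unusually few of them. The function names, the `closed_below` cut-off and the `min_blinks_per_minute` threshold are placeholders chosen for the example, not validated values.

```python
from typing import List


def count_blinks(eye_openness: List[float], closed_below: float = 0.2) -> int:
    """Count open-to-closed transitions in a per-frame eye-openness series."""
    blinks = 0
    was_closed = False
    for value in eye_openness:
        is_closed = value < closed_below
        if is_closed and not was_closed:
            blinks += 1
        was_closed = is_closed
    return blinks


def blinks_per_minute(eye_openness: List[float], fps: float) -> float:
    """Normalize the blink count by the clip's duration."""
    if not eye_openness or fps <= 0:
        raise ValueError("need at least one frame and a positive frame rate")
    minutes = len(eye_openness) / fps / 60.0
    return count_blinks(eye_openness) / minutes


def looks_suspicious(
    eye_openness: List[float], fps: float, min_blinks_per_minute: float = 5.0
) -> bool:
    """Flag clips whose subject blinks far less often than the assumed minimum."""
    return blinks_per_minute(eye_openness, fps) < min_blinks_per_minute


# Hypothetical one-minute clips at 10 frames per second.
natural = [0.05 if i % 50 in (0, 1) else 0.9 for i in range(600)]  # periodic blinks
unblinking = [0.9] * 600  # eyes never close

print(blinks_per_minute(natural, fps=10), looks_suspicious(natural, fps=10))
print(blinks_per_minute(unblinking, fps=10), looks_suspicious(unblinking, fps=10))
```

A single-signal heuristic like this is easy for a forger to defeat once it is known, which is why shared and regularly updated training data matters for any detection approach.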

Track and identify malicious deepfakes and synthetic media activity via other signals of activity in the info ecosystem

As identified elsewhere, the best way to track deepfakes or other synthetic media may be to focus on real-time tools for sourcing enhanced bot activity, detecting darknet organizing or creating an early warning on coordinated state or para-statal action. Recent reporting from the Digital Disinformation Lab at the Institute for the Future and the Oxford Internet Institute, among others, explores the growing pervasiveness of these tactics but also signals of this activity that can be observed.

Identify, incentivize and reward high-quality information, and root out mis/mal/disinformation

A distributed approach could include analogies and lessons learned from cyber-security, for example, the use of an equivalent to a “bug bounty.”

Support platform-based approaches (social networks, video-sharing, search, and news) including many of the above elements

Platform collaboration could include detection and signaling of detection at upload, at sharing, or at search. It could include opportunities for cross-industry collaboration and a shared approach as well as a range of individual platform solutions from bans, to de-indexing or down-ranking, to UI signaling to users, to changes to terms-of-service (as for example with bans on deepfakes by sites such as PornHub or Gfycat). Critical policy and technical elements here include how to distinguish malicious deepfakes from other usages for satire, entertainment and creativity, how to distinguish levels of computational manipulation that range from a photo taken with “portrait mode” to a fully engineered face transplant, and how to reduce false positives; and then how to communicate this to regular users as well as journalists and fact-finders. As Nick Diakopoulos suggests, related to solutions around supporting journalism, if platforms ‘were to make media verification algorithms freely available via APIs, computational journalists could integrate verification signals into their larger workflows’.
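
The concern about false positives can be made concrete with some simple arithmetic. The sketch below estimates what share of flagged items would actually be synthetic, given how rare synthetic media is among uploads and how accurate a detector is. All of the numbers are hypothetical and chosen only to show the shape of the problem.

```python
def flagged_precision(
    prevalence: float, true_positive_rate: float, false_positive_rate: float
) -> float:
    """Share of flagged items that really are synthetic (Bayes' rule)."""
    true_flags = prevalence * true_positive_rate
    false_flags = (1.0 - prevalence) * false_positive_rate
    if true_flags + false_flags == 0:
        raise ValueError("detector never flags anything under these rates")
    return true_flags / (true_flags + false_flags)


# Hypothetical scenario: 1 in 1,000 uploads is synthetic, the detector
# catches 95% of them, and it wrongly flags 1% of authentic uploads.
share = flagged_precision(
    prevalence=0.001, true_positive_rate=0.95, false_positive_rate=0.01
)
print(f"{share:.1%} of flagged uploads are actually synthetic")
```

Under these assumed rates, fewer than one in ten flagged uploads is actually synthetic, even though the detector looks accurate on paper. This is one reason why signaling to users may be safer than automated takedowns.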

The experience of human rights defenders and journalists with recent platform approaches to content moderation in the context of current pressures around ‘fake news’ and countering violent extremism (with Facebook in Myanmar/Burma and with YouTube’s handling of evidentiary content from Syria) highlights the need for extreme caution around approaches focused on takedowns of content. WITNESS’ recent submission to the United Nations Special Rapporteur on Freedom of Opinion and Expression highlights many of the issues we have encountered, and his report highlights steps companies should take to protect and promote human rights in this area.

In addition, there remain gaps in the tools available on platforms to enable solutions to other existing verification, trust and provenance problems around recycled, faked and other open-source images. One key recommendation out of the expert convening was that platforms, search and social media companies should prioritize development of key tools already identified in the OSINT human rights and journalism community as critical; particularly reverse video search.

Ensure commercial tools provide clear forensic information or watermarking to indicate manipulation

Companies such as Adobe producing consumer-oriented video, image, and audio manipulation tools have limited incentives to build counter-forensics measures into the outputs of their products since they are designed to be convincing to the human eye but not machines. There should be a unified consensus that consumer video and image manipulation should be machine forensics readable to the maximum extent possible, even if the manipulation is not visible to the naked human eye. Another approach would look at how to include new forms of watermarking – for example, as suggested by Hany Farid, to include an invisible signature to images created using Google’s TensorFlow technology, an open-source library used in much machine learning and deep learning work. Such approaches will not resolve the analog hole where a copy is created of a digital media item but might provide traces that could be useful to signal forensically many synthetic media items.

Protect individuals vulnerable to malicious deepfakes by investing in new forms of adversarial attacks

Adversarial attacks include invisible-to-the-human-eye pixel shifts or visible scrambler-patch objects in images that disrupt computer vision and result in classification failures. Hypothetically these could be used as a user- or platform-led approach to “pollution” of training data around specific individuals in order to prevent bulk re-use of images available on an image search platform (e.g. Google Images) as training data that could be mobilized to create a synthetic image. Others such as the EqualAIs initiative are exploring how similar tools could be used to impede increasingly pervasive facial recognition and preserve some forms of visual anonymity for vulnerable individuals (another concern of WITNESS’ within our Tech + Advocacy program).
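
The following toy sketch shows the basic mechanism behind a pixel-shift attack, in the spirit of the fast gradient sign method. A tiny linear "classifier" stands in for a real computer vision model, and every pixel is nudged by a small amount in whichever direction lowers the classifier's score. The weights, the eight-pixel "photo" and the `epsilon` value are invented for the example. Real attacks target deep networks and need the model's gradients, or an approximation of them.

```python
from typing import List


def score(weights: List[float], bias: float, pixels: List[float]) -> float:
    """Linear model: a positive score means 'this is the target person'."""
    return sum(w * p for w, p in zip(weights, pixels)) + bias


def classify(weights: List[float], bias: float, pixels: List[float]) -> str:
    return "target_person" if score(weights, bias, pixels) > 0 else "unknown"


def perturb(weights: List[float], pixels: List[float], epsilon: float) -> List[float]:
    """Shift each pixel by at most epsilon in the direction that lowers the score."""
    def sign(x: float) -> int:
        return (x > 0) - (x < 0)

    # For a linear model the gradient of the score w.r.t. each pixel is its weight.
    return [min(1.0, max(0.0, p - epsilon * sign(w))) for w, p in zip(weights, pixels)]


# Hypothetical model and image (pixel intensities scaled to 0..1).
weights = [0.9, -0.8, 0.7, -0.6, 0.5, -0.4, 0.3, -0.2]
bias = -0.3
photo = [0.5, 0.4, 0.6, 0.5, 0.5, 0.4, 0.6, 0.5]

protected = perturb(weights, photo, epsilon=0.05)
print(classify(weights, bias, photo))
print(classify(weights, bias, protected))
print(max(abs(a - b) for a, b in zip(photo, protected)))
```

No pixel moves by more than `epsilon`, yet the label flips. The same property that makes such perturbations hard for a person to see is what would make them usable as a protective measure.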

Consider the pros and cons of an immutable authentication trail, particularly for high profile individuals

As suggested by Bobby Chesney and Danielle Citron, this concept of using lifelogging to voluntarily track movements and action to provide the potential of a rebuttal to a deepfake via “a certified alibi credibly proving he or she did not do or say the thing depicted” might have applications for particular niche or high-profile communities, e.g. celebrities and other public figures although not without significant collateral damage to privacy and the possibility of facilitating government surveillance.

Ensure communication between key affected communities and the AI industry

The most-affected by mal-uses of synthetic media will be vulnerable societies where misinformation and disinformation are already rife, where levels of trust are low and there are few institutions for verification and fact-checking. Many of the incidents of ‘digital wildfire’ where recycled or lightly edited images have spread violence have recently taken place in the context of closed messaging apps such as WhatsApp in India.

Most recently in the human rights space, there has been mobilization in the Global South Facebook Coalition to push Facebook to listen more closely to, resource responses to, and act on real-world harms in societies such as Myanmar/Burma and Sri Lanka. These groups, and the likely risks and particular threat paradigms in these societies, need to be at the center of solutions.

Confront shared root causes with other dis/mal/misinformation problems

There are shared root causes with other information disorder problems around how audiences understand and share mis- and disinformation. There are also overlaps with the broader societal conversation around micro-targeting of advertising and personalized content, and around how technologies oriented towards the “attention economy” reward fast-moving content.

Develop industry and AI self-regulation and ethics training/codes, as well as 3rd party review boards.

As part of the broader discussion of AI and ethics, there could be a stronger emphasis on training on human rights and dual-use implications of synthetic media tools (for example, drawing on operationalization of the Toronto Principles on AI); this could include discussion of these in research papers and of use of independent, empowered 3rd party review boards.

Pursue existing and novel legal, regulatory and policy approaches

Professors Bobby Chesney and Danielle Citron have recently published an advance draft of “Deep Fakes: A Looming Challenge for Privacy, Democracy, and National Security,” a paper outlining an extensive range of primarily US-centric legal, regulatory and policy options that could be considered. Legal options include new narrowly targeted prohibitions on certain intentionally harmful deepfakes, the use of defamation or fraud law, civil liability including the possibility of suing creators or platforms for content (including via potential amendments to CDA Section 230), the utilization of copyright law or right to publicity, as well as criminal liability. Within the US there might be potential limited roles for the Federal Trade Commission, the Federal Communications Commission and the Federal Election Commission.

Other areas to consider that have been raised elsewhere include re-thinking image-based sexual abuse legislation as well as in certain circumstances and jurisdictions expanding post-mortem publicity rights or utilizing the right to be forgotten around circulated images. The options that would be available globally and in other jurisdictions than the US remain under-explored.

Are there additional areas of solutions that we should be including or encouraging? Let us know by contacting sam@witness.org.

Based on these solution-areas we have been making the following recommendations for next steps:

- Baseline research and a focused sprint on the optimal ways to track authenticity, integrity, provenance and digital edits of images, audio, and video from capture to sharing to ongoing use. Research should focus on a rights-protecting approach that a) maximizes how many people can access these tools, b) minimizes barriers to entry and potential suppression of free speech without compromising the right to privacy and freedom from surveillance, c) minimizes risk to vulnerable creators and custody-holders and balances these with d) the potential feasibility of integrating these approaches in a broader context of platforms, social media and search engines. This research needs to reflect platform, independent commercial and open-source activist efforts, consider the use of blockchain and similar technologies, review precedents (e.g. spam and current anti-disinformation efforts) and identify pros and cons to different approaches as well as the unanticipated risks. WITNESS will lead on supporting this research and sprint.

- Detailed threat modeling around synthetic media mal-uses for particular key stakeholders (journalists, human rights defenders, others). Create models based on actors, motivations and attack vectors, resulting in the identification of tailored approaches relevant to specific stakeholders or issues/values at stake.

- Public and private dialogue on how platforms, social media sites, and search engines design a shared approach and better coordinate around mal-uses of synthetic media. Much like the public discussions around data use and content moderation, there is a role for third parties in civil society to serve as a public voice on pros/cons of various approaches, as well as to facilitate public discussion and serve as a neutral space for consensus building. WITNESS will support this type of outcomes-oriented discussion.

- Platforms, search and social media companies should prioritize development of key tools already identified in the OSINT human rights and journalism community as critical; particularly reverse video search. This is because many of the problems of synthetic media relate to existing challenges around verification and trust in visual media.

- More shared learning on how to detect synthetic media that brings together existing practices from manual and automatic forensics analysis with human rights, Open Source Intelligence (OSINT) and journalistic practitioners—potentially via a workshop where they test/learn each other’s methods and work out what to adopt and how to make techniques accessible. WITNESS and First Draft will engage on this.

- Prepare for the emergence of synthetic media in real-world situations by working with journalists and human rights defenders to build playbooks for upcoming risk scenarios so that no-one can claim “we didn’t see this coming” and so as to facilitate more understanding of technologies at stake. WITNESS and First Draft will collaborate on this.

- Include additional stakeholders who were under-represented in the June 11, 2018 convening and are critical voices either in an additional meeting or in upcoming activities including:

  - Global South voices as well as marginalized communities in the U.S. and Europe.

  - Policy and legal voices at national and international level.

  - Artists and provocateurs.

- Additional understanding of relevant research questions and lead research to inform other strategies. First Draft will lead additional research.